The Assessment

An AI-powered, comprehensive, and user-friendly English proficiency assessment that tests all four language skills.

Book a demo

Tailored and adaptive

The assessment is tailored to each test-taker's skill level. It begins with a short benchmarking exercise, consisting of multiple-choice questions, that estimates that level.

Then, it adapts the reading and listening tasks, and the open-ended questions, to assess test-takers’ language skills in more detail and to challenge test-takers appropriately.

The result is an accurate assessment of test-takers’ language proficiency.

Each skill test is about 10 minutes long, but the actual overall duration of the assessment depends on the skills tested and can be customized.

The four-skills test

A comprehensive assessment of test-takers' real English communicative skills.

Benchmarking exercise

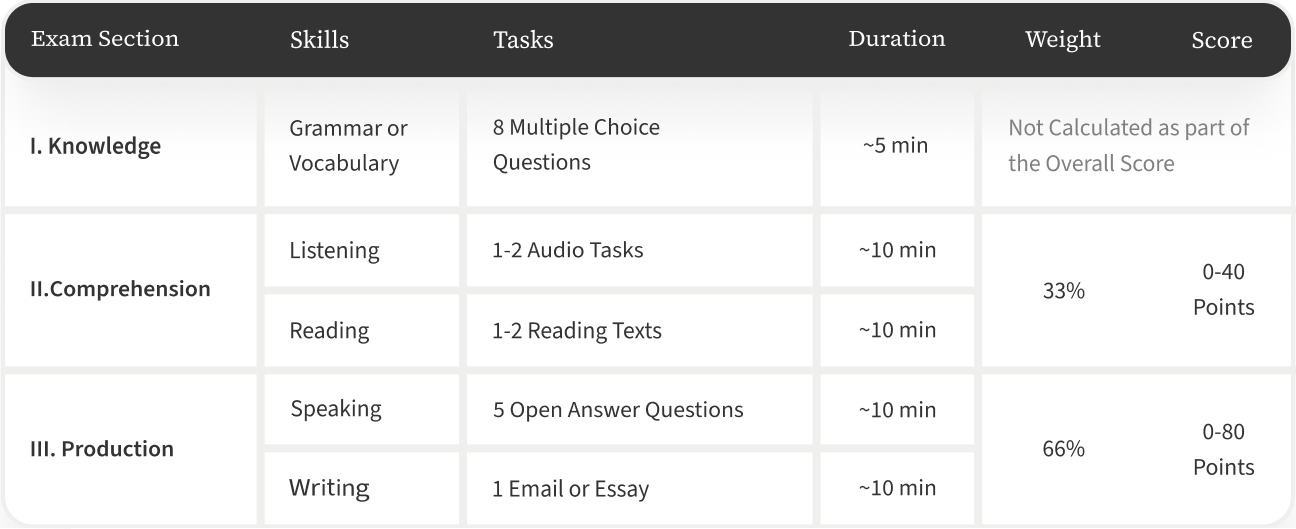

The benchmarking exercise is an initial multiple-choice test of approximately eight questions that assess grammar and vocabulary knowledge. It calibrates the rest of the assessment and is itself adaptive.

As test-takers answer questions correctly, they receive higher-level questions. As they make mistakes, they move down to lower levels.
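The up-and-down behavior described above can be illustrated with a minimal sketch. It is not the assessment's actual algorithm: the six-level scale, the starting level, the one-level step size, and the function name `run_benchmark` are all assumptions made for illustration.

```python
from typing import List

# Hypothetical difficulty scale; the real exercise may use a different range.
MIN_LEVEL = 1
MAX_LEVEL = 6


def run_benchmark(answers_correct: List[bool], start_level: int = 3) -> List[int]:
    """Return the difficulty level of each question served.

    answers_correct[i] says whether the test-taker answered question i correctly.
    The step size of one level per answer is an assumption.
    """
    level = start_level
    served = []
    for correct in answers_correct:
        served.append(level)
        if correct:
            # A correct answer moves the next question up one level.
            level = min(MAX_LEVEL, level + 1)
        else:
            # A mistake moves the next question down one level.
            level = max(MIN_LEVEL, level - 1)
    return served


# Eight answers, matching the approximate length of the exercise.
levels = run_benchmark([True, True, False, True, False, False, True, True])
print(levels)
```

In this sketch the sequence of served levels settles around the point where the test-taker answers correctly about half the time, which is what makes such a simple staircase usable as a rough calibration step.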

Listening

The listening comprehension test includes 1-2 audio clips of 30-60 seconds each, followed by 3-10 multiple-choice questions.

The test adapts the difficulty of the questions based on the test-taker's performance.

The topics of the audio clips can be customized according to your needs.

Reading

The reading comprehension test involves reading 1-2 texts and then answering 3-10 multiple-choice questions.

Like the listening test, the reading test adapts the difficulty of the tasks based on the test-taker's performance on the initial task. The materials are adapted from authentic texts wherever possible and can be customized with content relevant to the testing context.

Speaking

The speaking test consists of 5 open-ended questions that assess the test-taker's fluency, pronunciation, vocabulary, and grammar skills in a variety of areas.

There are two levels of questions, covering domains that include personal life, academic, public, and work. The lower-level questions are more concrete and use lower-level vocabulary than the higher-level questions. The level of the questions that test-takers receive does not determine the final score: test-takers can score at any level, regardless of the level of the questions they receive.

Speaking tasks can be customized to specific professional or academic topics and competencies.

Writing

For employees, the writing test consists of a professional-style email on either a general workforce topic or a particular subject area, depending on your needs and requirements. For students, the test consists of either an email or an essay task.

The level of the email task is determined by earlier performance. The essay writing task is an integrated task that requires an essay response to the reading task with the highest number of correct answers.

The writing is scored based on topic development (completeness), organization, style (appropriateness), grammar, vocabulary, and spelling and punctuation (mechanics).

This test assesses the test-taker's ability to write effectively in a real-world context.

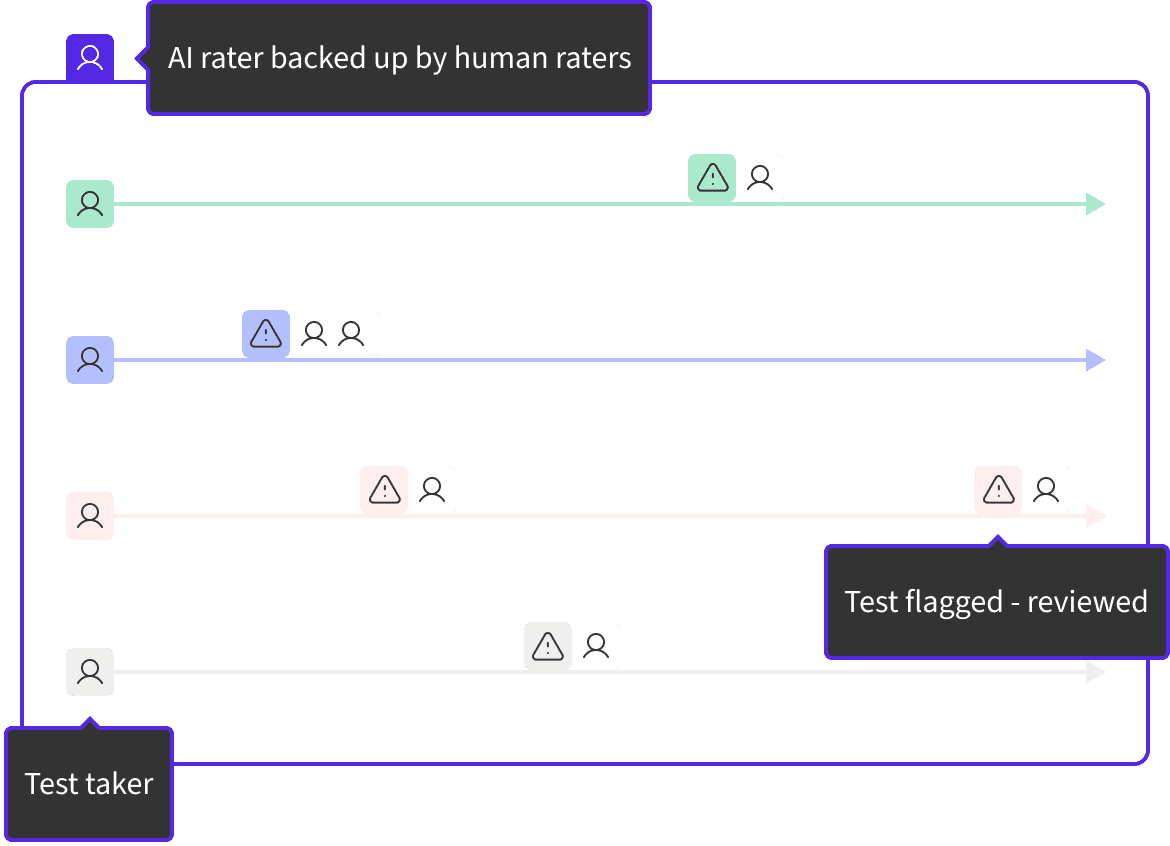

AI and human grading

The assessment is scored by an AI rater and backed up by an expert team of human raters.

These raters check a percentage of the AI-scored tests to constantly improve the accuracy of the AI algorithms. This ensures the benefits of AI and the security of human rating for every test result.

If the AI algorithm flags a test for possible cheating, at least one human rater reviews it to ensure the results are fair.

CEFR alignment

The CEFR (Common European Framework of Reference for Languages) is an internationally recognized standard for assessing language proficiency as a set of communicative skills.

Our assessment has been aligned with the CEFR from its inception, with a focus on communicative language assessment. The structure of the questions is intended to elicit the test-taker's practical proficiency skills, the rating rubrics used to grade each assessment are aligned with the CEFR, and the AI rater measures these same proficiencies.

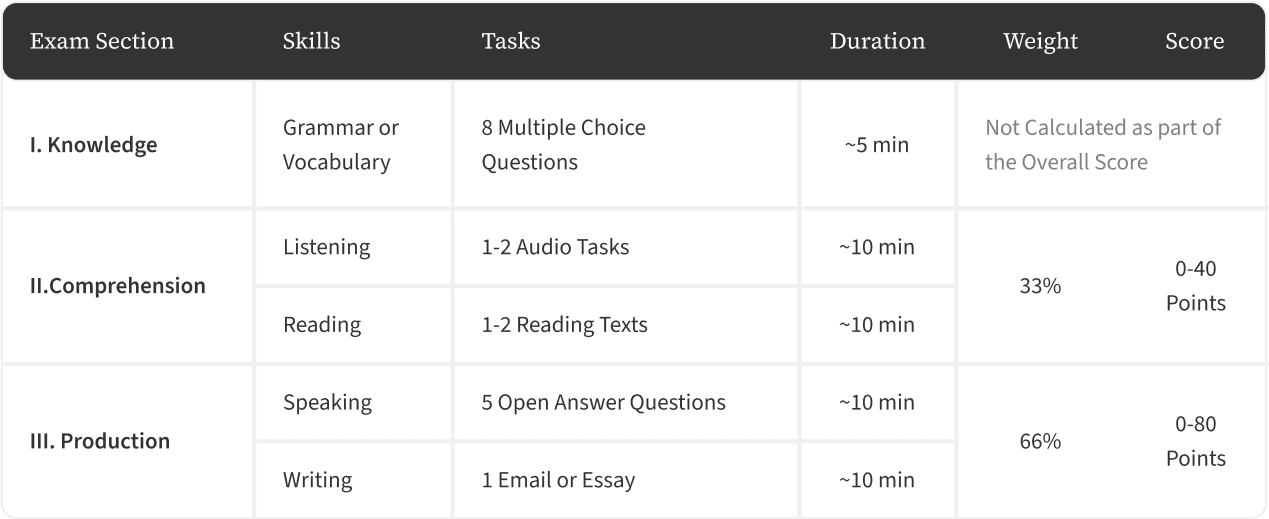

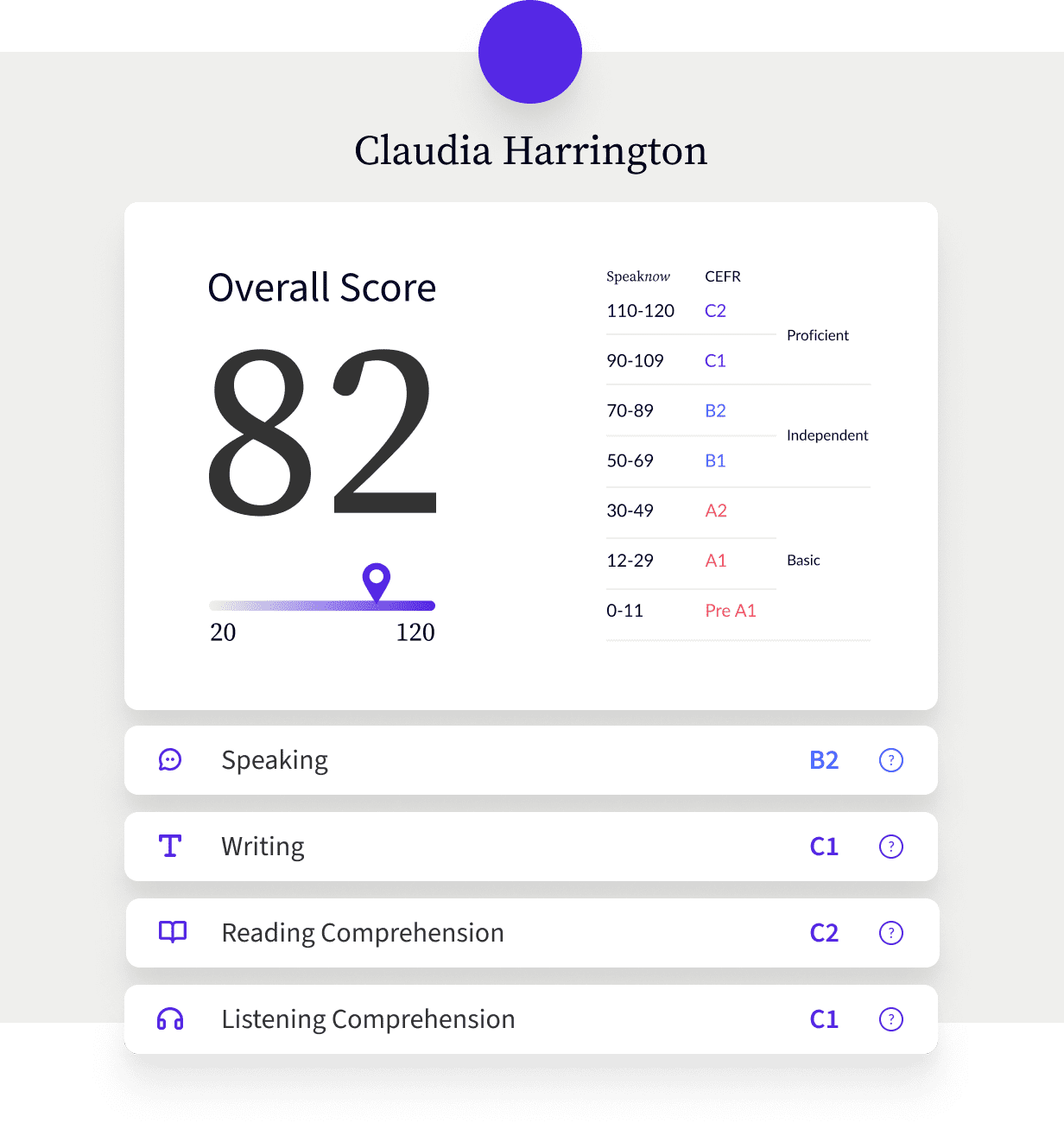

Grading system

Based on the Can Do statements in the CEFR rubrics, each test item is assigned a specific CEFR level.

In addition to the CEFR level provided for test items, each test-taker receives an overall score. This score is numeric and is derived from a weighted combination of the subtests.

- Productive skills, namely speaking and writing, account for 80 points, which is two-thirds of the overall score.

- Receptive skills, namely listening and reading, account for 40 points, which is one-third of the overall score.
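The weighting above can be worked through in a short sketch. Only the 80-point and 40-point totals come from the description. The normalized 0-to-1 subtest results, the equal split between the two skills inside each group, and the function name `overall_score` are assumptions for illustration, and the sketch assumes all four skills are tested.

```python
# Point totals stated in the grading description.
PRODUCTIVE_POINTS = 80  # speaking and writing combined
RECEPTIVE_POINTS = 40   # listening and reading combined


def overall_score(speaking: float, writing: float,
                  listening: float, reading: float) -> float:
    """Combine four subtest results into an overall score out of 120.

    Each argument is a normalized result between 0 and 1 (an assumption).
    Each skill is assumed to carry half of its group's points.
    """
    results = {"speaking": speaking, "writing": writing,
               "listening": listening, "reading": reading}
    for name, value in results.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")

    productive = PRODUCTIVE_POINTS * (speaking + writing) / 2
    receptive = RECEPTIVE_POINTS * (listening + reading) / 2
    return productive + receptive


# A test-taker with perfect receptive results but half marks on
# productive skills earns 40 + 40 = 80 of 120 points.
print(overall_score(speaking=0.5, writing=0.5, listening=1.0, reading=1.0))
```

Because productive skills carry twice the weight of receptive skills, a weak speaking or writing result lowers the overall score twice as much as an equally weak listening or reading result.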

Detailed score report

The score report is broken down by general skill and, for the productive skills (speaking and writing), by sub-skill, giving insight into fine-grained aspects of language proficiency.

Test-takers and administrators receive detailed information about language proficiency, which offers a clear understanding of what test-takers can achieve at each skill level.

Administrators receive not only scores but also a detailed breakdown of proficiency in the various sub-skills. This gives a clearer picture of which areas need more targeted teaching, training, and skill development.

Customized benchmarking

Our expert pedagogical team can help you understand the score levels needed for your specific goals and needs and set tailored benchmarks.

Often, test administrators know they need their students, employees, or program participants to have ‘good English,’ but they can't put their finger on what that means. Benchmarking is a process of analyzing requirements and aligning them with the CEFR so that the scores can be interpreted.

Simply put, customized benchmarking defines a set of capabilities, offering test administrators a precise metric for their specific definition of ‘good English’.